FMSTS: Reinforcement Learning for Variable Selection in Branch and Bound

First RL approach to fully optimize branching strategy in B&B from scratch. Introduces subtree size as a naturally observable Q-function, with a novel Multiplicative Dueling Architecture (MDA) for MILP variable selection.

Authors: Marc Etheve, Zacharie Alès, Côme Bissuel, Olivier Juan, Safia Kedad-Sidhoum

Published: arXiv:2005.10026 — May 20, 2020

Affiliations: EDF R&D · ENSTA Paris (Institut Polytechnique de Paris) · CNAM Paris (CEDRIC)

My role

Senior researcher and industrial problem setter. I provided the EDF unit commitment use case, defined the research direction, and contributed to algorithm design.

Context & Motivation

Mixed-Integer Linear Programming (MILP) underlies a huge range of real-world optimisation problems — from energy scheduling to logistics. The dominant solver paradigm is Branch and Bound (B&B): a divide-and-conquer tree search guided by heuristics for choosing which variable to branch on at each node. The quality of these branching strategies has an outsized effect on solving time, yet commercial solvers rely on hand-crafted rules that are agnostic to the specific structure of recurring industrial problems.

A natural question is: can a learning agent discover a better branching strategy for a given problem family, purely from experience, without imitating any expert policy?

This paper answers yes — and does so rigorously.

Problem Setting

The work targets a common industrial scenario: a company repeatedly solves instances of the same MILP problem with fluctuating data drawn from an unknown distribution \(\mathcal{D}\). Formally, each instance shares the structure

\[p \in \mathcal{P} : \quad \min_{x \in \mathbb{R}^n}\ c^\top x \quad \text{s.t.} \quad Ax \leq b,\; x_\mathcal{J} \in \{0,1\}^{|\mathcal{J}|}\]and the goal is to learn a branching policy \(\Pi^*\) minimising the expected B&B tree size:

\[\Pi^* \in \arg\min_{\Pi}\ \mathbb{E}_{p \sim \mathcal{D}}\bigl[\mu(\Pi(p))\bigr]\]Because the MDP is deterministic (given an instance and a state, any action leads to the same next state), exact Q-values become directly observable after tree expansion — a key property that FMSTS exploits.

The FMSTS Method

1. Subtree Size as Value Function

The central insight is to define \(Q^\pi(s, a)\) as the size of the subtree rooted at state \(s\), generated by action \(a\) then following policy \(\pi\). This choice is:

- Observable — count the nodes once the subtree is fully expanded, no bootstrapping needed.

- Principled — Proposition 2 (proved in the paper) shows that under Depth-First Search, minimising every local subtree size is equivalent to minimising the global tree size. This is the key theoretical result that makes local RL training globally meaningful.

The value function satisfies the Bellman equation:

\[V^\pi(s) = 1 + V^\pi\!\left(D_0^{\pi(s)}(s)\right) + V^\pi\!\left(D_1^{\pi(s)}(s)\right)\]Why DFS? Under Breadth-First Search, subtrees interact through shared primal bounds, breaking the local-to-global consistency (Proposition 1). DFS isolates subtrees, making the consistency hold exactly.

2. Instance-Normalised Loss

The naive squared loss over-weights hard instances (large trees). FMSTS corrects this by weighting each experience by the inverse tree size, so every instance contributes equally:

\[\mathcal{L}(\theta) = \mathbb{E}_{s,a \sim \rho}\!\left[ \frac{1}{V^{\pi_\theta}(r(s))} \Bigl(Q^{\pi_\theta}(s,a) - \hat{Q}(s,a;\theta)\Bigr)^2 \right]\]3. Normalised Prioritized Experience Replay

Q-values scale exponentially along the tree depth and vary strongly across instances. Standard prioritized replay (which uses raw TD errors) would be dominated by deep, large trees. FMSTS normalises the TD error by the target Q-value before computing sampling probabilities:

\[p_j \propto \frac{\left|Q^{\pi_{\theta_j}}(s_j, a_j) - \hat{Q}(s_j, a_j; \theta_j)\right|}{Q^{\pi_{\theta_j}}(s_j, a_j)}\]4. FMSTS Algorithm

for t = 0, …, N-1:

1. Draw a random instance p from the problem distribution.

2. Solve p with ε-greedy policy πθ_t (explore or exploit).

3. Collect (s, a, Q^π(s,a), Q̂(s,a;θ_t)) for every node; store in replay buffer B.

4. Sample a batch from B using normalised prioritized probabilities.

5. Update θ_t → θ_{t+1} by gradient descent on the normalised loss.

Neural Network Architecture

State Representation

| Feature type | Content |

|---|---|

| Static | PCA reduction of concatenated instance data \((A, b, c)\) |

| Dynamic | Node depth · primal–dual gap · branching state (3|J|-dim one-hot) |

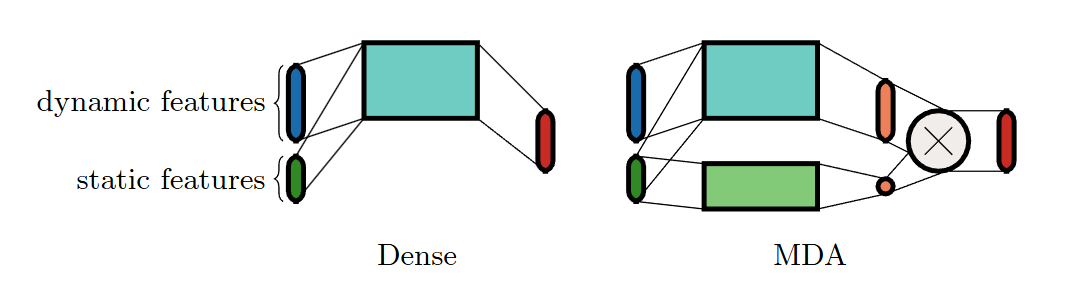

Multiplicative Dueling Architecture (MDA)

The B&B Q-function is inherently multiplicative: each level down the tree, the subtree size roughly halves. Additive (summation-based) networks like standard Dueling DQN struggle with this. MDA fixes the problem by:

- Using a product instead of a sum between the two branches.

- Using a linear (not ReLU) activation on the scalar branch, so the network can represent both growth and decay of value across tree depths.

Experiments

Benchmark Problems (EDF industrial instances)

| Problem | Constraints | Variables | Binary vars | Domain |

|---|---|---|---|---|

| P1 | 186 | 120 | 54 | Microgrid energy management |

| P2 | 282 | 207 | 96 | Hydroelectric valley optimisation |

Evaluation Protocol

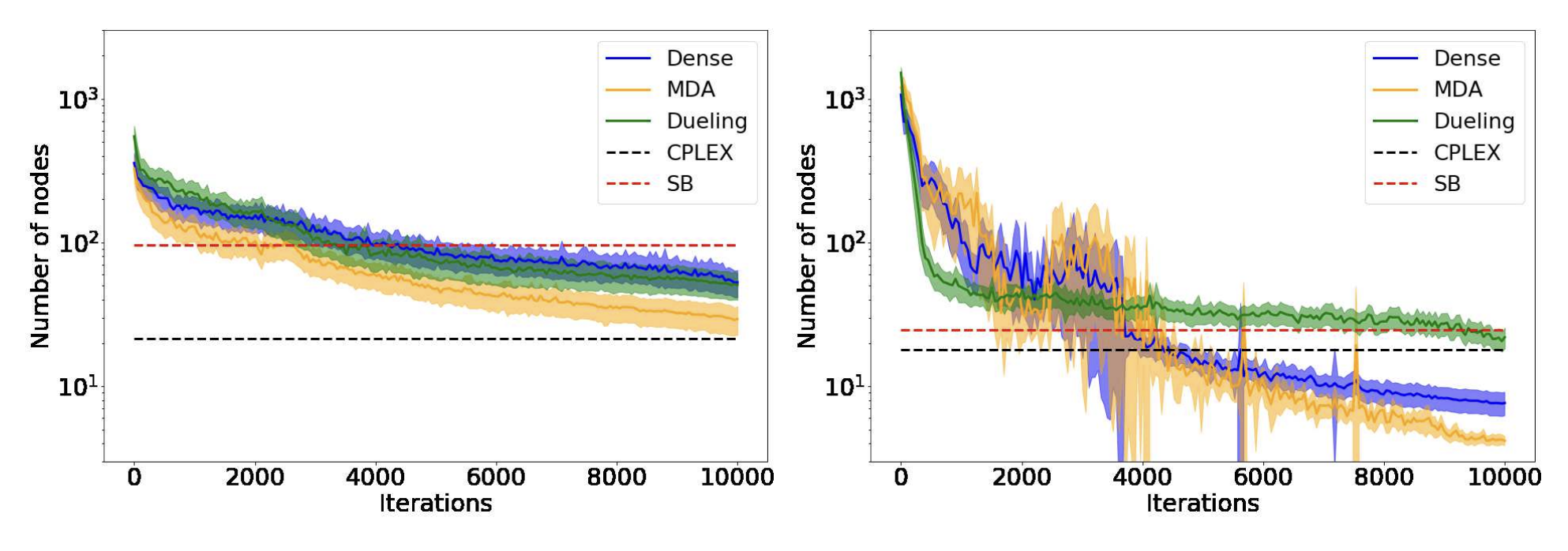

100-fold cross-validation · 200 training instances · 500 test instances per fold · metric: average number of B&B nodes (log scale) on test set throughout training. Baselines: CPLEX 12.7.1 (default branching under DFS, no presolve/cuts) and full Strong Branching (SB).

Results

Key takeaways:

- MDA > Dueling > Dense — architecture design is critical; the multiplicative structure of the value function must be matched by the network.

- No overfitting — agents generalise well to unseen instances from the same distribution.

- Negligible inference cost — selecting a branch requires only a neural network forward pass, vs. solving multiple LP relaxations for Strong Branching.

Theoretical Contributions

Proposition 1 (negative result): Minimising local subtree sizes is not globally optimal in general (e.g., under Breadth-First Search). This motivates the DFS requirement.

Proposition 2 (positive result, proved): Under DFS, a branching policy minimises the global B&B tree size if and only if it minimises every subtree size locally. This establishes that local RL training directly optimises the global objective — no reward engineering needed.

Limitations & Future Directions

Current limitations:

- Requires Depth-First Search; other node-selection strategies break the optimality guarantee.

- Training on hard instances is computationally expensive (random init → exponential trees).

- Feature set is intentionally minimal; richer representations would likely improve results.

Promising extensions:

- Supervised pre-training (warm start from imitation learning) to tame early exploration.

- Hierarchical action space — select among branching heuristics rather than raw variables.

- Graph-based state representations (e.g., bipartite constraint–variable graphs).

- Branch-and-Cut extension, incorporating cut selection into the learned policy.

- Alternative metrics — the framework is metric-agnostic; simplex iterations or wall-clock time are direct drop-in replacements for tree size.

Citation

@article{etheve2020reinforcement,

title = {Reinforcement Learning for Variable Selection in a Branch and Bound Algorithm},

author = {Etheve, Marc and Al{\`e}s, Zacharie and Bissuel, C{\^o}me

and Juan, Olivier and Kedad-Sidhoum, Safia},

journal = {arXiv preprint arXiv:2005.10026},

year = {2020}

}

arXiv: arXiv:2005.10026